Artificial intelligence will reshape how wars are fought, and the United States enters this era with genuine advantages. American companies build the most capable models in the world. US-based chip designers dominate the advanced semiconductor supply chain. Private investment in AI flows into American firms at a rate that dwarfs every other nation. These are real strengths, and they underpin a reasonable belief that the United States leads the global AI competition. The confidence born of this belief, however, rests on an assumption that deserves scrutiny: that breakthroughs made in American labs translate into durable, exclusive military advantage. In previous technology competitions, the path from discovery to adversary replication was measured in years or decades. In AI, that timeline is compressed to months or even weeks, and this compression undermines the assumption.

The underlying reason for this compression is idea fluidity: The core algorithmic innovations that power AI capabilities diffuse rapidly and freely across borders through open publications, open-source model releases, and the global movement of AI researchers. When ideas cannot be hoarded, the factors that determine who holds advantage in AI shift from who discovers the breakthrough to who commands the resources to build on breakthroughs and field them fastest. Those factors—compute infrastructure, talent, and the organizational capacity to adopt AI at speed and scale—tell a more competitive story than the headline narrative of American dominance suggests. They also illuminate a path toward durable AI superiority for military applications.

AI and the Atom Bomb: Why This Technology Race Is Different

In previous technology competitions, replication timelines were long, not because adversaries lacked the underlying ideas, but because the physical prerequisites for acting on them were scarce, controllable, and difficult to acquire. The physics of nuclear fission has been textbook knowledge for nearly a century, yet only nine nations have ever built a nuclear weapon. The ideas have diffused completely, but the materials have not. Enriched uranium and precision manufacturing equipment can be physically denied, and these execution barriers are what keep the nuclear club small despite the availability of the underlying science.

AI follows the same basic logic: Ideas alone are insufficient without the compute and talent to act on them. However, the barriers to execution are fundamentally different in character. The materials of AI are commercially available, globally distributed, and expanding in supply. You cannot restrict access to GPUs (graphics processing units, a fundamental building block of computing power) the way you can interdict fissile material, and, as US export control policy has demonstrated, even targeted restrictions prove porous and politically ephemeral. Meanwhile, the ideas themselves transfer at a fidelity that has no precedent in previous technology races. Frontier labs do not merely publish general principles; they release detailed technical reports and training methodologies. Significant algorithmic ideas like the transformer, chain-of-thought reasoning, and reinforcement learning from human feedback are published openly, replicated quickly, and improved upon by a global community that treats foundational research as a public good. As AI researcher Sebastian Raschka has observed, we’ve reached a point where no organization will have exclusive access to the foundational technology that drives capabilities. The result is a competition in which both the knowledge and the means to act on it are more widely accessible than in any prior military-technological rivalry.

The evidence for this trend is both structural and empirical. AI research moves through open preprint servers like arXiv, where submissions in artificial intelligence alone grew from roughly 12,500 papers in 2021 to nearly 45,000 in 2025. Breakthroughs also diffuse through open-weight model releases that allow anyone to inspect, replicate, and build upon frontier work. As a result, open-weight models now lag state-of-the-art performance by just three months, and frontier capabilities become accessible on consumer-grade hardware within twelve months. Algorithmic efficiency (the ability to achieve a given level of performance with less compute) is improving roughly threefold per year. These are precisely the kinds of gains that diffuse freely through publications and open-source initiatives.

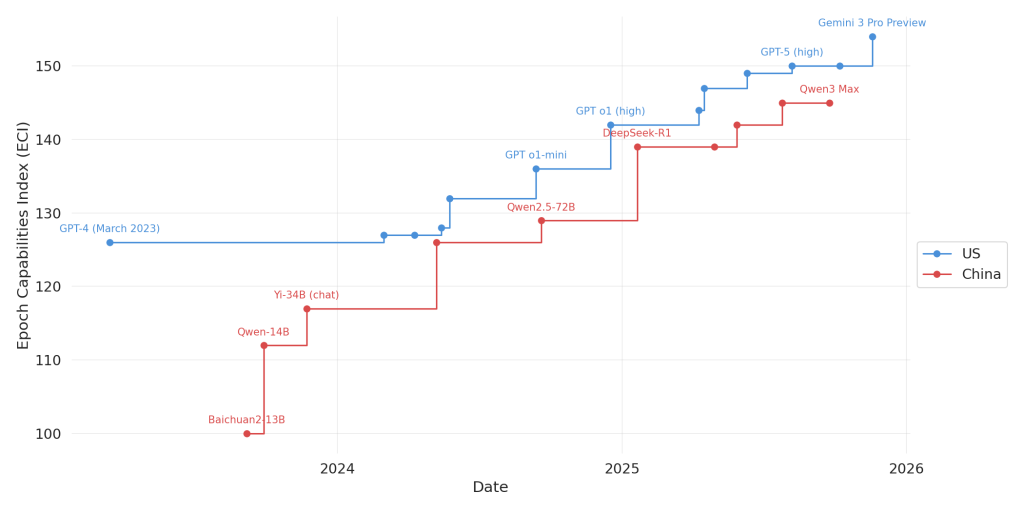

In this environment, the pattern of replication has played out repeatedly. When Meta’s LLaMA model leaked in March 2023, the open-source community produced instruction-tuned, quantized, and multimodal variants within weeks. In response, a Google engineer wrote in a leaked internal memo: “We have no moat, and neither does OpenAI.” DeepSeek’s R1 release in January 2025 demonstrated the dynamic at national scale: The Chinese lab took techniques originating in Western research, recombined them under severe compute constraints, and produced a model that rivaled the best American systems. Within months, other Chinese labs built on DeepSeek’s innovations to release their own competitive models, leading to the current landscape in which Alibaba, Moonshot, Z.ai, and others compete with America’s top labs.

When the algorithmic ideas that power AI capabilities cannot be monopolized, the competition shifts to implementation: Who has the physical infrastructure and human capital to act on ideas that are available to everyone, and who can move fastest from concept to fielded capability?

AI Compute at the Point of Need

Compute is the most tangible prerequisite for acting on AI breakthroughs, and its role extends well beyond the initial training of frontier models. Operational AI systems require continuous inference at scale. Furthermore, the iterative experimentation that turns a generally capable model into a purpose-built tool for logistics optimization, target recognition, or decision support demands sustained access to large quantities of compute. The recipe may be free, but every organization still needs its own kitchen.

At the national level, the United States holds a commanding lead in installed infrastructure and investment volume. America possesses over four thousand data centers to China’s roughly four hundred, and American AI hyperscaler spending dwarfs analogous Chinese investment by a factor of five. Since October 2022, the United States has maintained export controls on advanced semiconductors, limiting China’s access to the hardware that enables efficient AI operations. But these advantages are less decisive than they initially appear. In December 2025, the White House permitted NVIDIA to sell its H200 chips to approved Chinese customers. At the time this policy change occurred, eighteen of the twenty largest GPU clusters in the world primarily used chips from that same generation. Even with restrictions on NVIDIA’s most advanced Blackwell architecture, the net effect is significant erosion of the compute advantage that export controls were designed to maintain. Meanwhile, China possesses a significant structural energy advantage. Its installed generation capacity is more than double that of the United States, and this gap is only growing as it adds new capacity fifteen times faster than America does. These advantages position China to sustain the exponentially growing power demands of successive AI generations in ways the American grid currently cannot.

The competition for compute at the national level is relevant to many military AI applications, since a large share of our capabilities will no doubt be powered by underlying models that run in commercial data centers owned by Amazon, Microsoft, and Google. However, a distinct subset of operational applications will rely on a pool of compute that isn’t served by commercial data centers. Military AI must increasingly function at the edge: on ships, in aircraft, at forward operating bases, and on platforms operating in electromagnetically contested or communications-denied environments. Edge inference, meaning running trained models where connectivity to the cloud is intermittent or nonexistent, demands a different hardware ecosystem, different power profiles, and different engineering trade-offs than the centralized compute that dominates the commercial landscape. A model that performs brilliantly in a Virginia data center is operationally irrelevant if it cannot run on the hardware available in the field.

Idea fluidity sharpens this problem. If algorithmic breakthroughs diffuse in weeks rather than years, then the military cannot afford long integration cycles to adapt each new generation of models to edge-capable hardware. The pace of commercial AI innovation means the military faces a continuous adaptation challenge. Every week a model sits in testing or integration is a week in which the underlying capability is proliferating to adversaries who may be more effective at pushing it to their own operational edge. The Department of Defense’s new directive to integrate commercial AI models within thirty days of public release reflects an awareness of this dynamic, but meeting that timeline requires compute infrastructure that is both powerful enough to be useful and flexible enough to accommodate rapid model turnover.

AI Talent and the Last Mile

The same logic applies to talent. Idea fluidity diminishes the value of researchers as originators of exclusive breakthroughs because a brilliant insight will appear on arXiv within months regardless of where it originated. But the people who take a generally available model and adapt it to a specific military problem are not subject to the same diffusion dynamics. Applied engineering and institutional integration are tacit, contextual, and organization-specific in ways that algorithmic research is not. The question, then, is not just who produces the most novel AI research, but who has the depth of talent to implement AI in ways that provide real operational value.

At the national level, the trends are not encouraging. China now produces nearly twice as many science and engineering PhD graduates as the United States, and the gap continues to widen. At the same time, the global distribution of top AI researchers is shifting. According to MacroPolo’s Global AI Talent Tracker, the share of top AI researchers working at US institutions fell from 59 percent in 2019 to 42 percent in 2022, while China’s share rose from 11 percent to 28 percent. Overall researcher mobility declined across the same period, suggesting more talent is staying in home countries rather than migrating to the United States. DeepSeek’s R1 release crystallized this trend: An analysis of its roughly two hundred authors found that nearly all were educated at Chinese universities, and almost half had studied exclusively in China. China’s domestic talent pipeline can now produce frontier-quality AI research without depending on the American university system.

But the military talent problem has an additional dimension that the national picture does not capture. The Department of Defense is not just competing with China for AI talent; it is competing with Amazon, Microsoft, and Google, among countless others. The engineers who can do the critical last-mile work of adapting a generally available model to a military problem are exactly the people the private sector pays six- and seven-figure salaries to attract. A recent department strategy memo acknowledges this reality, directing the use of special hiring and pay authorities and requiring every service and component to establish AI talent development plans.

The services are taking concrete steps to build this talent organically. The Army’s Artificial Intelligence Integration Center (AI2C), collocated with Carnegie Mellon University, trains operationally experienced officers in machine learning and data science and then pushes them back into the force as dual-domain experts: personnel who combine military knowledge with technical capability. In December 2025, the Army went further, establishing a formal AI and machine learning officer career track to give these specialists a professional home. The underlying theory is sound, and I had the opportunity to witness its efficacy in practice as a member of the first cohort of Artificial Intelligence Scholars. Creating an ideal solution requires the simultaneous maintenance of two mental models: one of the operational problem and one of the technical approach to resolving it. At AI2C, we were frequently able to instantiate both with high resolution. This experience is difficult to replicate for software engineers with no operational grounding.

Yet the Army is systematically undermining its own investment. As Captain Nathaniel Fairbank documented in a recent MWI article, the AI Scholars program produces worse promotion outcomes than the force at large, despite drawing some of the service’s most academically competitive officers. The structural cause is a misalignment between the program’s four-year timeline and the Army’s career milestones: Officers return from graduate school and utilization tours to find that the window for completing key developmental assignments and professional military education has effectively closed. The situation is reminiscent of that confronting the Army’s elite cyber professionals, who, finding that continued service in purely technical roles is not supported by the promotion system, choose to leave rather than venture out from behind the keyboard.

In a technical context defined by idea fluidity, this is not merely a personnel management failure—it is a strategic one. If algorithmic breakthroughs diffuse in weeks, the military’s AI advantage cannot rest on access to better algorithms. Every officer who is passed over for promotion, forced into a career-field change, or driven to separate from military service represents a forfeited implementation advantage, and the private sector is more than happy to absorb the Army’s losses. China does not need to poach American AI talent if the American military cannot retain its own.

Adoption Speed as the Decisive Variable

If ideas flow freely and the material prerequisites for acting on them are increasingly contested, then the competition ultimately reduces to organizational speed: Who moves fastest from available capability to fielded system? The Department of Defense strategy memo puts this plainly with its directive to “weaponize learning speed, and measure and manage cycle time and adoption rates as decisive variables in the AI era.”

China has been building toward this for years. As Shannon Vaughn, a fellow at the Foreign Policy Research Institute, argues, China’s military AI progress is better understood as an adoption contest than a technology one. Beijing’s military-civil fusion apparatus is designed to minimize the friction between civilian innovation and military fielding. A capability that begins as a commercial technique can move through procurement into military use, then scale through follow-on contracting and doctrinal experimentation. China’s structural advantage is not that it produces better AI. By most measures, it does not. Its advantage is that its institutional architecture is purpose-built to compress the timeline from innovation to operational capability. The Department of Defense strategy, with its pace-setting projects, thirty-day model integration directive, and barrier removal board, represents the most explicit American acknowledgment to date that the United States must compete on this axis or risk losing an AI race it currently leads on technical merit.

Advantage as Tempo

The United States cannot treat AI advantage as a stockpile—a lead built on breakthrough discoveries that adversaries will take years to replicate. Idea fluidity renders this paradigm obsolete. The algorithmic innovations that power AI capabilities diffuse too rapidly, too freely, and at too high a fidelity for any nation to monopolize them. What remains are the factors that determine who can act on those innovations fastest: compute infrastructure that extends to the operational edge, technical talent that the military can develop and retain, and organizational machinery that compresses the cycle from commercial breakthrough to fielded capability. These are the components of military AI tempo, and tempo is what determines advantage in the AI era. The United States possesses genuine strengths in each of these areas, but none of them are assured, and in several the current trends favor China. The race will not be won by the nation that makes the most discoveries. It will be won by the nation that fields them fastest.

Captain Kyle Dotterrer is a research scientist at the Army Cyber Institute at West Point. Previously, at the Army Artificial Intelligence Integration Center, he served as the technical lead for the Artificial Intelligence Development Environment project—a platform designed to bring data science and machine learning capabilities to both enterprise and tactical environments.

The views expressed are those of the author and do not reflect the official position of the United States Military Academy, Department of the Army, or Department of Defense.

Image credit: Micah Wilson, US Army